What actually happened with Facebook the other week?

Facebook, Instagram and WhatsApp were unavailable for almost 6 hours on the 4th of October. The outage made headlines all over the world. It was arguably the longest outage to hit a social networking platform in recent years. This blog takes a look at how the outage unfolded. According to Facebook’s official incident report [1], the outage was triggered by a routine maintenance activity that unintentionally took down connectivity between Facebook’s data centers, which in turn cascaded into the removal of Facebook’s domain name system (DNS) servers from the Internet.

Let’s try to unpack this. Data centers are facilities that host servers, storage and networking equipment. A typical content provider, such as Facebook, has numerous data centers and replicates content and users’ data across them. These data centers are often connected via an internal backbone network that facilitates moving data between them. A backbone network comprises computers called routers and the fiber links that connect them. The routers are responsible for figuring out where to deliver each data packet they receive. Content providers constantly improve their backbone networks to ensure reliable and fast delivery of data to end users. However, these optimizations also increase complexity and introduce hidden dependencies. In this case, the routine maintenance activity appears to have triggered unknown bugs that disconnected the entire backbone of Facebook. Next, the DNS servers detected the problem with the network and removed themselves from the Internet. DNS servers translate the names we type in our browsers to IP addresses, which are the numerical labels used to address machines on the Internet. Hence, without DNS servers no one can access any web page unless they happen to know the corresponding IP addresses [2].

But how did the DNS servers remove themselves from the Internet? Networks in the Internet employ the Border Gateway Protocol (BGP) to learn how to reach other networks. BGP is the glue that binds the Internet together: it helps routers build a database of routes to other networks. Remember that a network is a collection of IP addresses, which are grouped into blocks called network prefixes. BGP is very chatty, since routers keep informing each other of network changes by sending messages called routing updates. What Facebook’s DNS servers did was to delete their addresses from BGP, a process known as route withdrawal. This happened because Facebook had implemented a resilience feature that disconnects from the Internet any DNS server that cannot speak to its data centers. This is a good design that would normally spread traffic across all the other data centers. This incident, however, took down the entire backbone of Facebook, which resulted in all of Facebook’s DNS servers becoming unreachable. Unfortunately, all the tools that Facebook needed to fix the original issue with the network also depended on DNS, which meant that Facebook engineers had to gain physical access to the data centers to resolve the outage, resulting in extra delays.
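To make the announce and withdraw mechanics concrete, here is a minimal Python sketch of a toy routing table. It is not how Facebook’s systems or real BGP routers are implemented: the class `RoutingTable`, the function `dns_site_health_check`, the origin name `dns-site` and the prefix `192.0.2.0/24` (a documentation range) are all hypothetical names chosen for illustration.

```python
import ipaddress


class RoutingTable:
    """Toy table of prefixes learned via BGP (no AS paths, no policies)."""

    def __init__(self):
        self.routes = {}  # prefix -> name of the network that announced it

    def announce(self, prefix, origin):
        self.routes[ipaddress.ip_network(prefix)] = origin

    def withdraw(self, prefix):
        # Withdrawing a prefix that is not present is a no-op.
        self.routes.pop(ipaddress.ip_network(prefix), None)

    def lookup(self, address):
        """Return the origin of the most specific matching prefix, or None."""
        addr = ipaddress.ip_address(address)
        matches = [p for p in self.routes if addr in p]
        if not matches:
            return None  # no route: packets to this address cannot be delivered
        best = max(matches, key=lambda p: p.prefixlen)
        return self.routes[best]


def dns_site_health_check(table, prefix, origin, backbone_reachable):
    """Hypothetical resilience rule: keep the DNS prefix announced only
    while the DNS site can still reach the data centers."""
    if backbone_reachable:
        table.announce(prefix, origin)
    else:
        table.withdraw(prefix)


table = RoutingTable()
dns_site_health_check(table, "192.0.2.0/24", "dns-site", backbone_reachable=True)
print(table.lookup("192.0.2.53"))   # a route exists while the backbone is up
dns_site_health_check(table, "192.0.2.0/24", "dns-site", backbone_reachable=False)
print(table.lookup("192.0.2.53"))   # the route is gone after the withdrawal
```

With a single failing site, other sites would still announce their prefixes and absorb the traffic. When every site fails the check at once, as in this outage, no route to any DNS server remains.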

The outage as we saw it

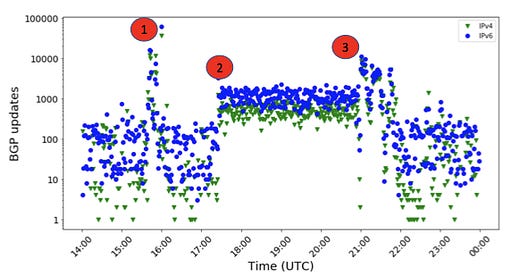

While we do not have insight into Facebook’s internal network, we can track potential connectivity issues to Facebook by observing BGP updates that are related to Facebook addresses. We used BGP update traces from over 188 routers, collected by RIPE RIS [3]. Figure 1 shows how the number of routing updates changed during the outage for both IPv4 and IPv6, which are the two types of IP addresses in use today. The updates underwent three phases of sharp increase, which are labelled with red circles. The first phase coincided with the onset of the outage. This phase lasted for 29 minutes, from 03:39 pm to 04:08 pm UTC. In this phase, many of Facebook’s network prefixes were withdrawn; some were re-advertised, but those connected to DNS were not. The second phase started over an hour later, around 05:25 pm UTC. This phase involved BGP activity from one IPv4 prefix, 69.171.250.0/24, that kept changing. We also observed a limited number of IPv6 prefixes that kept changing. While we do not know why these prefixes became so active, this could have been a first attempt by Facebook to resolve the outage, which apparently did not succeed. The last phase started at 09:00 pm and finished at 09:53 pm UTC. Here, Facebook resolved the problem.
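The kind of counting behind such a plot can be sketched as follows. This is a simplified illustration, not the code used for the analysis: it assumes the updates have already been extracted into `(unix_time, kind, prefix)` tuples, where `kind` is `"A"` for an announcement and `"W"` for a withdrawal. The function name `count_updates`, the 5-minute bin size and the sample records are assumptions made up for the example.

```python
from collections import Counter
from datetime import datetime, timezone


def count_updates(updates, bin_seconds=300):
    """Count updates per (bin start time, kind).

    `updates` is an iterable of (unix_time, kind, prefix) tuples,
    where kind is "A" (announcement) or "W" (withdrawal).
    """
    counts = Counter()
    for ts, kind, _prefix in updates:
        bin_start = ts - (ts % bin_seconds)  # round down to the bin boundary
        counts[(bin_start, kind)] += 1
    return counts


# Made-up sample records placed around the reported onset (03:39 pm UTC).
t0 = int(datetime(2021, 10, 4, 15, 39, tzinfo=timezone.utc).timestamp())
sample = [
    (t0, "W", "192.0.2.0/24"),
    (t0 + 30, "W", "198.51.100.0/24"),
    (t0 + 60, "A", "192.0.2.0/24"),
    (t0 + 600, "W", "192.0.2.0/24"),
]

for (bin_start, kind), n in sorted(count_updates(sample).items()):
    when = datetime.fromtimestamp(bin_start, tz=timezone.utc)
    print(when.strftime("%H:%M"), kind, n)
```

Running the same counting separately for IPv4 and IPv6 prefixes would give the two series that the figure distinguishes.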

Figure 1: BGP updates for Facebook’s prefixes

Impact on the stability of the Internet

An outage in one network should not affect the rest of the Internet unless that network is crucial to the relaying of data between networks. Facebook is not such a network. However, there are billions of devices that connect to Facebook. Whenever they connect, they send DNS resolution requests, which are directed to Facebook’s DNS servers through a hierarchy of DNS servers. To avoid an explosion in the number of requests and to speed up DNS resolution, DNS servers cache recent replies. But in this case there was nothing to cache, so requests went all the way to the top of the hierarchy, that is, to the servers responsible for .com and to those responsible for all domains, which are called root servers.
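The effect of having nothing to cache can be illustrated with a toy resolver. The sketch below is a deliberate simplification: real resolvers also cache failures for a short time and follow the hierarchy step by step. The class `CachingResolver`, the `upstream` callable and the name `example.test` are hypothetical and exist only for this example.

```python
import time


class CachingResolver:
    """Toy resolver that caches successful answers until their TTL expires.

    Failed lookups are not cached here, which is a simplification.
    """

    def __init__(self, upstream, clock=time.monotonic):
        self.upstream = upstream    # callable: name -> (address, ttl) or None
        self.clock = clock
        self.cache = {}             # name -> (address, expiry time)
        self.upstream_queries = 0

    def resolve(self, name):
        entry = self.cache.get(name)
        if entry is not None and entry[1] > self.clock():
            return entry[0]         # served from cache, no upstream traffic
        self.upstream_queries += 1
        answer = self.upstream(name)
        if answer is None:
            return None             # nothing to cache: the next lookup goes upstream again
        address, ttl = answer
        self.cache[name] = (address, self.clock() + ttl)
        return address


# Normal operation: one upstream query, then cache hits.
healthy = CachingResolver(lambda name: ("192.0.2.10", 300))
for _ in range(5):
    healthy.resolve("example.test")
print(healthy.upstream_queries)

# Outage: every single lookup travels upstream.
broken = CachingResolver(lambda name: None)
for _ in range(5):
    broken.resolve("example.test")
print(broken.upstream_queries)
```

Multiplied by billions of devices that keep retrying, the second pattern is what pushes extra load towards the servers higher up in the hierarchy.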

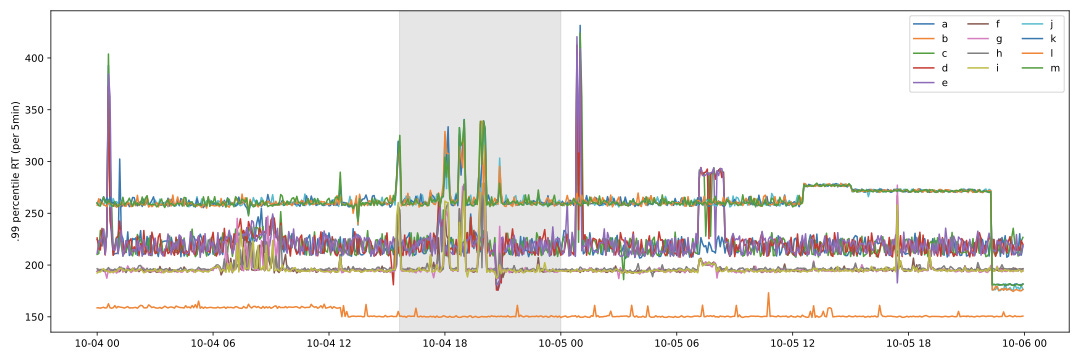

We looked at the response time for DNS queries [4] that were sent to the root and .com servers to check whether the outage had a tangible impact. More specifically, we looked at the 99th percentile delay, which marks the boundary of the slowest 1% of queries. We collected both IPv6 and IPv4 DNS measurements over TCP and UDP from October 4 to October 6. Our dataset is dominated by UDP measurements for both IP versions. In addition, we observed that DNS response times over TCP were less stable, and that IPv6 measurements were less consistent than IPv4 ones. For the rest of this blog, we focus our analysis on measurements using IPv4 and UDP.
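For readers unfamiliar with percentiles, the following sketch computes one using the nearest-rank definition. This is one common definition among several, and we do not claim it is the exact method used by the measurement platform. The function name `percentile` and the sample delays are made up for illustration.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up response times in milliseconds: 98 fast replies and 2 slow ones.
delays_ms = [20] * 98 + [700] * 2

print(percentile(delays_ms, 50))  # the median is unaffected by the slow replies
print(percentile(delays_ms, 99))  # the 99th percentile exposes the slow tail
```

The median hides a small number of very slow replies, whereas the 99th percentile reacts to them, which is why it is a useful lens for spotting a short-lived overload.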

Figure 2: Most affected root servers

We looked at the response times of 12 of the 13 root servers, leaving out the H server (see Figure 2). The way the outage impacted the root varied from one server to another. The A, D, I and K servers exhibited spikes in delay during the outage. The D server was hit hardest, with the 99th percentile delay more than tripling from 190 ms to 700 ms. The negative impact, however, was brief. More specifically, about 1% of the DNS queries that were sent during Facebook’s outage exhibited an excessive delay. A closer look showed that all probes experienced a hike in delay when measuring to the three most affected servers, which indicates that most server instances were impacted. Recall that DNSMON measures from a set of RIPE Atlas probes to every DNS server, which means that these measurements are likely to reach different instances due to anycast. We note that the latency to the E root server dropped, which appears to be a measurement artefact: the total number of measurements to E fell sharply around the time of the drop.

We now turn to evaluating the impact on the servers responsible for the .com domain, since these will surely be consulted for facebook.com. The impact of the outage is observed on all instances (see Figure 3). The increase in response time is clearly visible for all .com authoritative DNS servers except b.gtld-servers.net. As with the root servers, the impact was global: all probes to each server saw an increase in delay. However, the magnitude of the impact was four times that on the root servers. Note that some of the servers serving different gTLDs share the same infrastructure; for instance, Verisign operates both the .com and .net servers. We therefore checked whether the impact of the outage on DNS leaked to other gTLDs by examining the response times of the .net servers (see Figure 4). Unfortunately, the negative impact on .com spilled over to .net.

Figure 3: Most affected .com servers

Figure 4: Most affected .net servers, similar to .com since they share the same infrastructure

Summary

In summary, the Facebook outage was clearly visible in the BGP data. The attempts to resolve it seem to have started an hour after its onset. While Facebook impressively managed to bring back its network without further cascades, the resolution time raises many questions about the need for incorporating self-healing systems that operate at a much shorter time scale than human operators. We also saw that the impact of the outage had cascaded to the DNS. Luckily, the DNS infrastructure seems to have absorbed the increase in load quite well. We, however, believe that the impact on DNS needs to be investigated in more depth, primarily to understand how similar effects can be minimized in the future.

[1] https://engineering.fb.com/2021/10/05/networking-traffic/outage-details/

[2] This would not have helped in this case because using Facebook involves sending numerous DNS resolutions, not just a single one to the main page.

[3] We used BGPStream to download these traces.